Where I live, kangaroos often jump in front of traffic, frequently from blind spots, putting themselves in harm's way. I wanted to build a low-cost solution that alerts drivers to kangaroos in these blind spots and reduces the chance of a collision.

The system works as follows:

- A Raspberry Pi with a camera monitors an area

- TensorFlow Lite processes the Pi camera images, and the inference engine gives a confidence level that a kangaroo is present in the frame

- If there is a sighting, the photo is saved to cloud storage and a POST request is sent to a server

I wanted to take the opportunity to learn more about machine learning and deploying low-cost serverless applications.

The hardware components of the build are:

- A Raspberry Pi 3A+

- A battery backup and charging circuit (PiJuice HAT)

- A Raspberry Pi camera (the night vision variant, as kangaroos are most active at dusk)

- A waterproof container to house the electronics

- Solar panel for charging

- Cabling, terminators, etc.

The software components of the build are:

- The Python 3 application running on the Raspberry Pi, which:

  - Streams the camera data and runs inference on the images

  - Monitors the PiJuice HAT and posts telemetry

- A serverless stack in CDK (the AWS infrastructure-as-code library)

Kangaroo inference

The next step was to prove out the kangaroo detection. The solution needed to be low power and runnable on a Raspberry Pi from a battery. I settled on TensorFlow Lite, which has a large community and, if necessary, can take advantage of hardware-accelerated Tensor Processing Units to speed up inference and reduce the power required.

The edge detection code is hosted on my repo here

To set up the repository, you will also need to download an existing TensorFlow model that can detect what you’re looking for. In my case I was lucky that the mobilenet_v1_1.0_224 model has already been trained to recognise kangaroos.

I was going to go with Python 3 asyncio for the event pump. However, the AWS library boto3 did not yet support asyncio, so I settled on using threads for monitoring the PiJuice and capturing images for inference.
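The threaded layout can be sketched roughly as below. This is a minimal illustration, not the code from the repository: the names `worker` and `run_workers`, the intervals, and the placeholder tasks are all assumptions made for the example. Each worker calls its task, then waits on a shared `threading.Event`, which acts as an interruptible sleep so both threads stop promptly.

```python
import queue
import threading
import time


def worker(name, task, interval, stop, out):
    """Call `task` repeatedly until `stop` is set, pushing results to `out`."""
    while True:
        out.put((name, task()))
        # Event.wait is an interruptible sleep: it returns True once stop is set.
        if stop.wait(interval):
            break


def run_workers(tasks, duration):
    """Run one thread per task for `duration` seconds and collect the results.

    `tasks` maps a worker name to a (callable, interval_seconds) pair.
    """
    stop = threading.Event()
    out = queue.Queue()
    threads = [
        threading.Thread(target=worker, args=(name, task, interval, stop, out))
        for name, (task, interval) in tasks.items()
    ]
    for thread in threads:
        thread.start()
    time.sleep(duration)
    stop.set()
    for thread in threads:
        thread.join()
    results = []
    while not out.empty():
        results.append(out.get())
    return results


if __name__ == "__main__":
    # Placeholder tasks standing in for "capture a frame" and "read the battery".
    results = run_workers(
        {"camera": (lambda: "frame", 0.5), "battery": (lambda: 87, 2.0)},
        duration=3.0,
    )
    print(results)
```

Blocking calls such as an upload can run inside a task without stalling the other worker, which is the main reason threads are a workable substitute for an asyncio event loop here.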

To start the code run:

python3 start.py

You’ll need the requirements installed globally, such as TensorFlow Lite 2, exif, and requests.

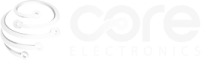

Once the project starts up, it captures images in 1080p and scans each image for the inference target, in my case kangaroos. The first iteration of the camera worker would capture the 1080p image, resize it to the TensorFlow model shape, and then do a single inference on the whole image. This worked OK for testing with close-up photos from my monitor.
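That first iteration (resize to the model shape, then one inference over the whole image) might look something like the sketch below. It assumes the `tflite_runtime`, `numpy` and Pillow packages; the function names `classify_frame` and `load_and_classify` are made up for this example and are not taken from the repository. The input scaling for float models is also an assumption based on how MobileNet models are commonly preprocessed.

```python
def classify_frame(interpreter, frame):
    """Run one inference on a frame already shaped for the model.

    Returns (class_index, score) for the highest-scoring class. The score is
    whatever the model outputs: typically a probability for float models and
    a 0 to 255 value for quantised ones.
    """
    input_detail = interpreter.get_input_details()[0]
    output_detail = interpreter.get_output_details()[0]
    interpreter.set_tensor(input_detail["index"], frame)
    interpreter.invoke()
    scores = interpreter.get_tensor(output_detail["index"])[0]
    best = max(range(len(scores)), key=lambda i: scores[i])
    return best, scores[best]


def load_and_classify(model_path, image_path):
    """Load a .tflite model, resize the image to its input shape, classify it."""
    # Imported here so classify_frame can be used without these packages.
    import numpy as np
    from PIL import Image
    from tflite_runtime.interpreter import Interpreter

    interpreter = Interpreter(model_path=model_path)
    interpreter.allocate_tensors()
    detail = interpreter.get_input_details()[0]
    _, height, width, _ = detail["shape"]  # e.g. [1, 224, 224, 3]

    image = Image.open(image_path).convert("RGB").resize((width, height))
    frame = np.expand_dims(np.asarray(image, dtype=detail["dtype"]), axis=0)
    if detail["dtype"] == np.float32:
        # Assumed preprocessing: float MobileNet models take inputs in [-1, 1].
        frame = (frame - 127.5) / 127.5
    return classify_frame(interpreter, frame)
```

The returned class index still has to be mapped to a label using the labels file that ships with the model, and compared against whichever class you treat as a sighting.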

Now that it was proven at a basic level, I assembled the Raspberry Pi, PiJuice, and camera inside a waterproof enclosure and drilled a panel-mount power input into the bottom so I could leave the device out in the weather with confidence. A regulated 5V solar panel was used to power the device during the day.

Now that it was set up with the PiJuice, I needed to configure the Raspberry Pi to wake up when the PiJuice was suitably charged by the solar panel, and to go to sleep before the battery drained completely. Luckily, PiJuice has some great software for this, and it was as straightforward as installing their GUI:

sudo apt-get install pijuice-gui

I then set the system task to wake up the device at 20% charge and halt at 5% charge. I left a bit of hysteresis between the two thresholds to avoid excessive sleep/wake cycles in poor charging conditions.
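To show why the gap between the two thresholds matters, here is a small sketch of the hysteresis logic. The PiJuice software handles this itself; the code below is only an illustration, and the function name `next_power_state` is made up for the example.

```python
WAKE_AT = 20  # percent charge at which the Pi is woken
HALT_AT = 5   # percent charge at which the Pi is halted


def next_power_state(running, charge_percent):
    """Return True if the Pi should be running after this battery reading."""
    if running:
        # Stay up until the battery falls to the halt threshold.
        return charge_percent > HALT_AT
    # Stay down until the battery has recovered to the wake threshold.
    return charge_percent >= WAKE_AT


if __name__ == "__main__":
    running = False
    for charge in [3, 10, 19, 20, 12, 6, 5, 10, 25]:
        running = next_power_state(running, charge)
        print(charge, "running" if running else "halted")
```

With a single threshold, a battery hovering around that value would toggle the device on every reading. With two, a reading of 10% keeps the device in whatever state it was already in.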

Cloud stack

In order to monitor the system from anywhere with an internet connection, without provisioning dedicated servers, I used an event-driven stack as shown below.

This stack was deployed using the AWS CDK library which allows writing cloud resources as code. The API gateway code and website were also part of the codebase written as a monorepo. The code is hosted here:

https://github.com/bpkneale/roo-api

The stack can be deployed as per the readme, using the CDK tool. You will need an AWS account.
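On the device side, a sighting is reported to this stack with a POST request. A minimal sketch of building such a request is shown here, using only the standard library so it is self-contained (the device code lists `requests` as a dependency, so this is a stand-in rather than the actual implementation). The function name `build_sighting_request`, the payload fields and the example URL are all hypothetical; the real API may expect something different.

```python
import json
import urllib.request
from datetime import datetime, timezone


def build_sighting_request(url, confidence, image_key):
    """Build (but do not send) a JSON POST request describing a sighting."""
    payload = {
        "confidence": round(confidence, 3),
        "image_key": image_key,  # hypothetical: where the photo was stored
        "seen_at": datetime.now(timezone.utc).isoformat(),
    }
    return urllib.request.Request(
        url,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


if __name__ == "__main__":
    request = build_sighting_request(
        "https://example.invalid/sightings", 0.91, "sightings/example.jpg"
    )
    print(request.get_method(), request.full_url)
    print(request.data.decode("utf-8"))
```

Sending it is then a call to `urllib.request.urlopen(request, timeout=10)`, ideally wrapped in error handling so a dropped connection does not stop the camera worker.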

Deployment

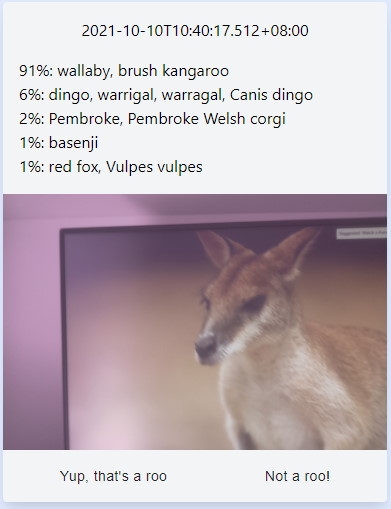

But when it was set up in a real-world scenario, I found the model struggled to find kangaroos in a wider field of view. After some real-world testing, I changed the inference to scan the whole field of view in chunks the size of the model shape (around 240px by 240px), with some overlap between chunks, to look for kangaroos. This worked out quite nicely, as can be seen in the highlighted inference result looking over a field with kangaroos.
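The chunked scan can be sketched as a generator of overlapping crop boxes. This is an illustration rather than the repository code: the name `tile_boxes` is made up, the default tile size of 224 is taken from the model name, and the overlap of 32 pixels is an arbitrary assumption.

```python
def _starts(length, tile, stride):
    """Start offsets so that tiles of size `tile` cover [0, length) with no gaps."""
    starts = list(range(0, length - tile, stride))
    starts.append(length - tile)  # the final tile sits flush with the far edge
    return starts


def tile_boxes(width, height, tile=224, overlap=32):
    """Yield (left, top, right, bottom) boxes that cover the whole frame."""
    if not 0 <= overlap < tile:
        raise ValueError("overlap must be at least 0 and smaller than tile")
    if width < tile or height < tile:
        raise ValueError("frame must be at least one tile in each dimension")
    stride = tile - overlap
    for top in _starts(height, tile, stride):
        for left in _starts(width, tile, stride):
            yield (left, top, left + tile, top + tile)


if __name__ == "__main__":
    boxes = list(tile_boxes(1920, 1080))
    print(len(boxes), boxes[0], boxes[-1])
```

Each box uses the same (left, top, right, bottom) order as Pillow's `Image.crop`, so every crop is already the model's input size and needs no resizing. The trade-off is one inference per tile instead of one per frame, which costs more time and power on the Pi.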

This project is currently hosted at https://roo.ben-k.dev

Future work

Some outstanding issues and planned future work include:

- Changing the model to use a greyscale input, as this would be more appropriate for low light and UV conditions

- Using the web interface to collect “yes/no” feedback on whether an image contains a kangaroo, to improve the inference model

- Pairing this device with a low-power radio (such as XBee or similar) to communicate with a warning system for oncoming traffic